How to Pick the Right Sources for Your AI Training Data

You can build the most sophisticated AI architecture in the world, but if you train it on biased, noisy, or outdated data, your model will fail. Today, the biggest bottleneck in AI development isn’t compute power but access to high-quality, legally compliant training data.

Based on our experience in AI development and consulting, we provide practical guidance on how to choose and evaluate sources of AI training data. In addition, we explore the key dimensions of data quality and explain how data providers should build and maintain their datasets to ensure stable model behavior and performance.

Key takeaways

- First-party data gives you full control and strong relevance to your product, but it’s limited in scale and diversity.

- Public datasets are easily accessible and useful for experimentation, but they may be inconsistent, outdated, or poorly documented, with unclear licensing and uneven data quality across sources.

- Licensed third-party data typically offers higher quality, better structure, GDPR compliance, and clear usage rights, making it suitable for commercial AI models, but it’s not free.

- Web-collected data provides large-scale, real-world diversity, but it introduces significant legal, ethical, and technical challenges, including copyright risks, privacy concerns, and the need for extensive cleaning and preprocessing.

- Synthetic data is valuable when real-world data is scarce, sensitive, or expensive to obtain, but it may lack real-world complexity and shouldn’t fully replace real data in human-centric domains such as customer service or sales.

- Evaluating AI training data sources is essential regardless of origin. Companies should review data quality, documentation, legal compliance, and long-term scalability to ensure that datasets remain reliable, representative, and suitable for AI model development over time.

What are the key criteria for high-quality AI training data?

Let’s explore the key dimensions of data quality that contribute to model performance and reliability:

1. Relevance to the task

Start by defining the specific problems the AI model is intended to solve, then ensure each feature and example in the dataset is relevant to that purpose.

2. Accuracy

Labels and features must accurately represent real-world entities. It’s also useful to evaluate how label noise and proxy variables (e.g., a job title used as a proxy for income level) may affect model training.

3. Completeness

The dataset needs to cover the full range of cases the model is expected to encounter, including typical scenarios, edge cases, rare events, and underrepresented groups.

4. Timeliness

Data must be up to date and reflect current real-world conditions.

5. Consistency

Data must follow consistent standards and formats across the dataset.

Common sources of AI training data (and their pros and cons)

Understanding the various sources of AI training data is crucial for selecting data that is appropriate for a specific task. Each source has its own strengths, limitations, and legal considerations that can affect a model’s effectiveness and reliability.

| Source | Data quality | Legal risk | Cost | Real-world relevance | Best for |

|---|---|---|---|---|---|

| First-party data | High (domain-specific, controlled) | Low | Low–Medium | High (but limited scope) | Internal use cases, personalization, proprietary knowledge |

| Public datasets | Medium (varies by source) | Medium–High | Free | Medium (can be outdated or biased) | Prototyping, experimentation, benchmarking |

| Licensed third-party data | High (clean, well-documented) | Low | High | High (broad, up-to-date) | Production models, scaling, reducing legal risk |

| Web-collected data | Low–Medium (noisy, unstructured) | High | Low–Medium | High (raw real-world signals) | Large-scale data collection, trend discovery |

| Synthetic data | Medium (depends on generation quality) | Low | Medium | Low–Medium (lacks real-world nuance) | Simulations, rare/unsafe scenarios, filling data gaps |

1. First-party data

First-party data is collected, owned, and controlled directly by an organization. It is sourced internally through its products, services, operations, or user interactions.

Examples of first-party data include:

- Customer behavior and feedback (reviews, support tickets, post-call surveys);

- Product interaction data (feature usage, navigation paths);

- Transaction logs (purchases, payments, subscription logs, order placements);

- Company’s knowledge bases, manuals, and research data.

Internal data is often used to address a company’s internal needs. For example, YouTube and Facebook rely on behavioral data from their users to train content recommendation algorithms.

First-party data is limited in scale and diversity because it only reflects information related to your own product or service, its usage, and interactions. It captures internal knowledge and the specific relationships between your company and your customers, creating a “bubble” with its own vocabulary and challenges unique to your product.

Relying solely on internal data and ignoring external behaviors can be risky when designing AI solutions for new customer segments, scaling to new markets or regions, or comparing performance against industry benchmarks.

Pros

- Systems trained on internal data can better respond to prompts or questions regarding proprietary content or knowledge.

- You have complete control over the data – how it’s used, where it’s stored, and who can access it.

- You don’t need to worry about violating copyright or data usage terms.

Cons

- First-party data is limited in scale and diversity.

2. Public datasets for machine learning

Public datasets are open to everyone and are often instantly available for use and experimentation.

Some widely trusted sources include government portals such as data.gov (U.S.) and data.europa.eu (EU). These platforms host large catalogs of open data provided by government institutions and international organizations. They typically offer clear licensing terms and span a wide range of categories, such as agriculture, economy and finance, and science and technology.

Another well-established source is the UC Irvine Machine Learning Repository, funded by the National Science Foundation. It contains datasets across subject areas such as business, computer science, healthcare, social sciences, and games.

There are also community-driven public dataset platforms such as Kaggle and Hugging Face.

Kaggle allows users to filter datasets by file type, license, tags, and usability rating. It also provides interactive dataset notebooks, often created by dataset authors or other community members. These notebooks help users explore real usage examples, understand potential limitations, and evaluate data quality.

One major drawback of Kaggle datasets is that they may lack detailed descriptions, be outdated, or miss clear licensing and source information, as well as important metadata such as coverage or collection methodology.

Hugging Face datasets offer ready-to-use code examples and advanced filtering options by file format, modality, language, and license. The platform also allows users to filter datasets by specific tasks, such as computer vision, natural language processing, audio processing, or tabular classification. Similarly to Kaggle, Hugging Face datasets may lack licensing details, source information, or metadata on coverage and collection.

Pros

- Public datasets are often instantly available for use and experimentation.

- Some public repositories offer community discussions, code examples, and documented pitfalls.

- Many public dataset portals provide filtering by subject area, data type, file format, licenses, and intended tasks these datasets are suitable for.

Cons

- Community-driven portals such as Kaggle and Hugging Face may contain datasets that lack licensing information, metadata, or source details.

- Data can be outdated or inconsistently updated, and some datasets may overrepresent certain regions or groups.

3. Licensed third-party data

Licensed third-party data refers to data purchased from commercial providers, often derived from real-world user activity (transactions, reviews, surveys, and browsing behavior).

Compared to publicly available data, licensed datasets more often than not comply with laws and regulations governing data usage. They also include more detailed and up-to-date information on demographics or industry-specific behavior that can’t be found in open sources and is difficult or expensive to gather on your own.

For example, WiserBrand supplies datasets containing comprehensive, up-to-date information on real-world consumer interactions, such as customer reviews and support call recordings, which are particularly valuable for training customer experience and sentiment-analysis models.

When you buy AI training data from licensed providers, make sure the agreement includes clear assurances that they have the right to provide it – and, ideally, a clause requiring them to cover your costs if someone else later claims the data was used without permission.

With these protections in place, much of the legal risk can be transferred to the vendor.

Pros

- Organic, real-world data.

- Licensed datasets are typically cleaned, standardized, carefully labeled, and well-documented.

- You receive clear rights to use the data for training artificial intelligence.

- They often provide more detailed and up-to-date information than open sources.

Cons

- Licensed data isn’t free.

4. Web-collected data

Web-collected data refers to data gathered from websites, forums, or social media platforms, typically using automated web scraping tools. These tools download web pages in HTML format and convert them into structured formats, such as spreadsheets or databases.

Companies collecting data for AI training may scrape product reviews, social media posts, news articles, and more from the web.

However, this approach raises legal and ethical concerns. Although data published online is publicly accessible, it’s often protected by copyright. Using such data for commercial AI models without permission may expose a company to liability for copyright infringement.

Moreover, collecting personal data from social media or public forums requires a legitimate interest and a “three-part test” under regulations such as the GDPR. This test involves identifying a legitimate interest, verifying that the use of personal data is necessary to achieve it, and confirming that the individual’s rights don’t override that interest.

Web data is often unstructured and contains significant noise. To make it suitable for AI training, it must be cleaned of irrelevant content, such as ads, repetitive page elements (navigation menus, footers), and SEO-driven material that contributes nothing meaningful to AI training data.

Automated web scraping may also face technical restrictions, as many websites prohibit automated data extraction in their robots.txt files. Even where scraping is not explicitly prohibited, rights holders may still bring claims for copyright infringement or privacy violations.

Pros

- Organic, real-world data.

Cons

- Use in commercial AI models may violate data protection laws (GDPR, CCPA) and copyright.

- Data is unstructured and noisy, requiring extensive cleaning.

5. Synthetic data

Also known as artificial data, synthetic data is created using generative AI tools, computer simulations, or statistical methods.

Synthetic data mimics real-world data and is often used in fields where original data is difficult to obtain or restricted by data protection regulations. For example, in fields such as autonomous driving and robotics, collecting a sufficient number of crash and failure scenarios is often dangerous or economically unfeasible, so simulations are used to fill these gaps.

While its use is justified in robotics, healthcare, and industrial inspection, synthetic data is less suitable for human-centric domains such as customer service (chatbots, call analysis, etc.), as it lacks the complexity and nuance of real-world interactions. More specifically, models trained on such data may struggle to interpret subtle human cues such as sarcasm, hesitation, or emotional shifts in speech, and may also fail to reflect current language patterns and customer concerns.

Furthermore, using synthetic data to train artificial intelligence models without periodically synchronizing with underlying real-world data can lead to a decline in model quality. This occurs because synthetic data may lack the diversity and feature distribution found in real-world data, and it can also amplify existing imperfections.

Pros

- Synthetic data is invaluable when real-world data is difficult or impossible to obtain.

Cons

- Synthetic data may lack the complexity and nuance of real-world data.

- Without periodic synchronization with real-world data, synthetic data can lead to AI model degradation.

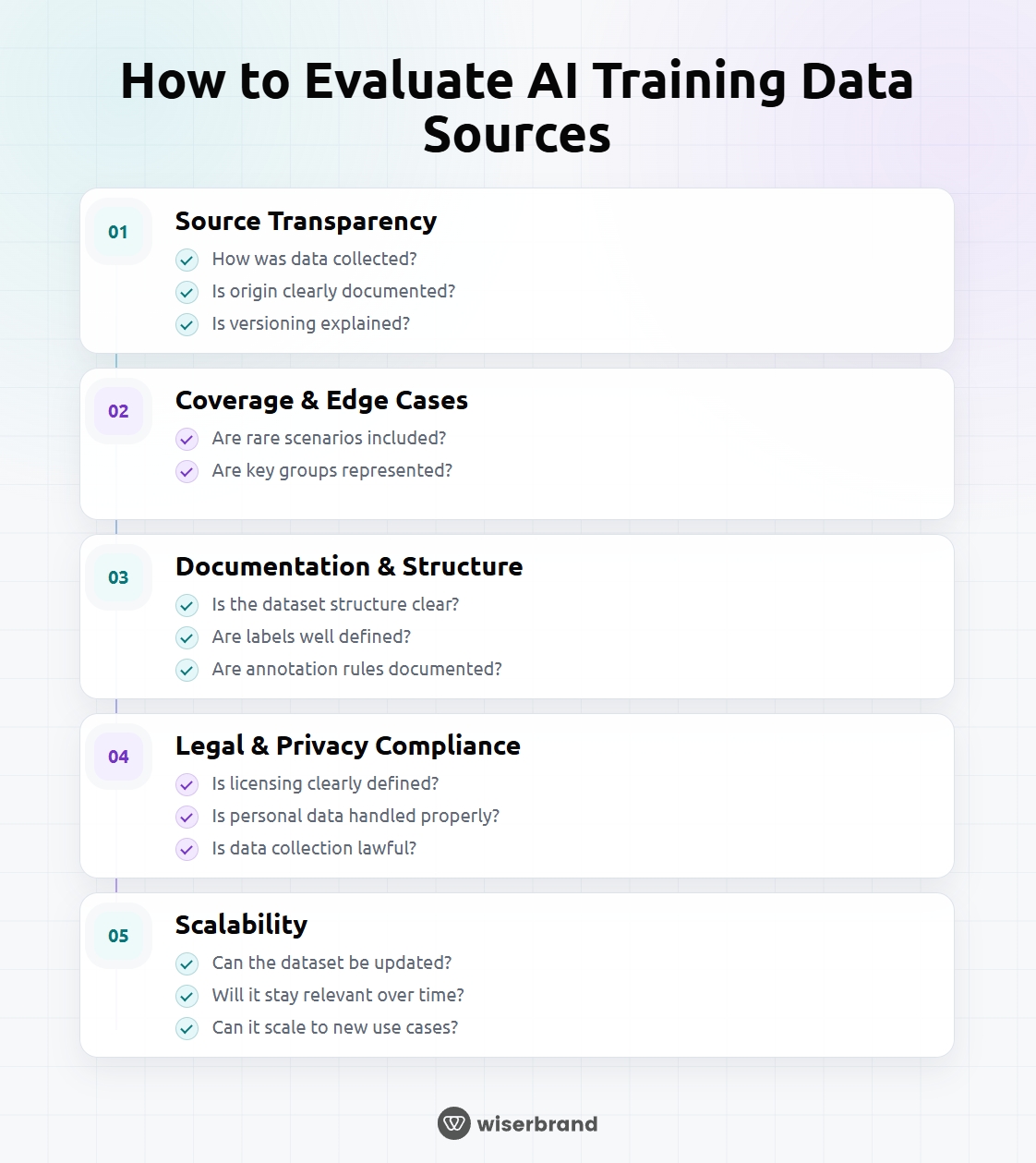

How to evaluate AI training data sources

Not all data sources used for training artificial intelligence are equally reliable or suitable for model development. Before using a dataset, it is crucial to evaluate its quality, completeness, and compliance with legal requirements, as well as whether the data source is suitable for your current needs and can support future expansion of the model.

1. Data collection methods and source transparency

When datasets have clear documentation and transparent collection methods, they are easier to evaluate for potential bias, verify for legal compliance, and update over time.

Check whether the source explains how the data was collected: manually, through automated scraping, via crowdsourcing, or generated synthetically.

The dataset should also clearly explain the origin of the data, including details such as:

- where the raw data comes from;

- the time range covered by the data;

- whether the data has been modified or labeled.

You can also check whether the dataset documentation describes processes for updates, corrections, and versioning.

2. Typical scenarios and representation of edge cases

Check whether the dataset includes both typical and rare scenarios, and whether certain groups, behaviors, or events necessary for your model are present.

A model that works well only in “normal” scenarios may appear effective in tests but fail at the slightest deviation in real-world use. Including edge cases helps ensure the model can generalize beyond typical scenarios.

What counts as an edge case depends on the type of AI system. For example, for natural language processing models, edge cases may include strong accents, local slang, speech errors or hesitations, and background noise in audio. For autonomous vehicle training models, edge cases are unusual weather conditions (heavy rain or blinding sun), irregular road signs, temporary detours, or objects resembling pedestrians (e.g., images of people on billboards).

To check whether a dataset contains edge cases, companies first need to identify the important scenarios their AI system is expected to handle.

They can then examine whether the dataset contains examples of each scenario. This is often done through statistical analysis, including checks such as:

- class distribution (how often each label appears);

- geographic or demographic distribution;

- frequency of rare events.

3. Dataset documentation and usability

To correctly interpret a dataset and avoid errors during model training, ensure that the accompanying documentation clearly explains the following:

- the dataset structure (file formats and data fields);

- label definitions;

- annotation rules.

Well-documented datasets also include examples of use and code snippets that demonstrate how to load and use the dataset in code.

4. Legal, privacy, and consent considerations

Here are the key legal considerations for companies when collecting data internally, purchasing it from vendors, or downloading it from public sources:

Copyright and intellectual property

Collecting data to train AI may result in copyright infringement, especially when the content is used for commercial purposes. However, some uses may fall under “fair use” (for example, transformative use or educational research). Purchasing training data from an external provider that clearly warrants it has the right to provide the data can help reduce the risk of copyright violations.

Data privacy

When using internal company data, processing personal data of customers or employees requires a consent and adherence to data minimization principles, meaning the collection should be limited to what is directly necessary for training AI systems.

Third-party vendors may have already removed personally identifiable information (PII) from their datasets. However, when purchasing licensed data, you should require the vendor to confirm that the data was collected lawfully and that they have the right to share it. You can also request documentation describing how the data was sourced. Finally, ensure the agreement includes clauses that protect you if their claims turn out to be false.

You have significantly less control over public data, particularly when it doesn’t come from official government portals. Datasets from online platforms are generally more difficult to verify in terms of licensing and terms of use. At a minimum, you should remove or mask sensitive PII, such as names, email addresses, or phone numbers.

Bias and discrimination

AI systems trained on biased data may produce discriminatory results, leading to liability under labor and civil rights laws.

However, ensuring that training data is free of bias is challenging, especially when using datasets specific to certain regions. You should review data sources to understand who is represented and who may be missing, check whether the model performs differently for different groups of people, and work to reduce any unfair disparities that are identified, while documenting these efforts.

5. Relevance over time and scalability

The effectiveness of artificial intelligence systems depends heavily on the quality and relevance of the data they are trained on. If a model is trained on outdated data, it may produce inaccurate or misleading results, failing to reflect current realities.

When choosing a data source for AI training, you should ensure that it can be regularly updated with new, relevant data over time.

Moreover, if your system expands to new regions, languages, or product lines, you may need additional data. For example, a dataset based on U.S. customer behavior may not be sufficient for other markets, so you should confirm that your data provider can supply relevant data for those regions.

What to check in AI training datasets

Whether you buy AI training data from a vendor or download it from a public source, you should evaluate it using the following quality criteria.

Data cleaning and normalization

Check whether the data has been cleaned of duplicate entries or of content that is irrelevant to your AI task. In addition, ensure the dataset is consistently formatted – for example, dates are converted to a uniform format, images are resized to a standard size, and audio files are normalized to consistent volume levels.

Annotation and labelling guidelines

Verify that clear, documented rules are used for labeling data, and that these rules are applied consistently across the dataset.

For example, when defining what counts as a “positive”, “negative”, or “mixed” customer review, labeling rules are defined by the data provider or the team responsible for data annotation. They may reflect overall sentiment (e.g., a review expressing satisfaction and praise is labeled as “positive”) or follow a more advanced aspect-based approach, using multiple labels instead of one (for example, separate labels for product quality, delivery, and customer service).

Another example is the labeling of sales calls. These can be categorized by purpose (product pitch, handling objections, closing attempt) or by outcome (meeting booked, deal closed, prospect not interested).

Data providers should clearly document the labeling rules they apply and explain how these rules support the intended use and effectiveness of the AI model.

Versioning and documentation

Data providers are responsible for versioning their datasets and documenting changes. They should create snapshots at different points in time, record metadata for each version, and clearly explain what changed between versions so that companies using the data can track its evolution.

Continuous quality assurance

Check that the provider performs regular quality checks to maintain data accuracy and consistency over time. The provider should take the following steps:

- Regularly review and validate the data to catch errors or gradual changes over time.

- Selectively check for mislabeled or corrupted data.

- Monitor for new patterns that weren’t present in earlier versions (e.g., new regulatory environments, regions or dialects, or economic shifts affecting behavior).

Wrapping up

When choosing AI training data, the most critical factor is whether the data is suitable for the model’s intended use and future scalability. High-quality datasets clearly indicate the source of the data, are fully documented, have a consistent structure, reflect real-world scenarios relevant to the problem the model is expected to solve, and comply with applicable legal and privacy requirements.

We hope this guide provides a clear understanding of what high-quality training data looks like, and a practical set of checkpoints to apply before using any dataset in model development.