Summary

Business Challenge

- Investigation, not fixing, was the bottleneck

The infrastructure spanned ~30 servers and multiple interconnected systems. Engineers spent 2–4 hours understanding incidents before they could fix anything.

- Heavy reliance on senior engineers

Investigation required deep system knowledge and was handled mostly by senior engineers, limiting scalability and slowing response during peak load.

- No consistent way to reconstruct incidents

Teams manually stitched timelines across logs with different formats and timestamps, leading to inconsistent analysis and uneven post-mortems.

What We Did

We built an AI agent that mirrors how experienced engineers investigate incidents, removing the manual work that slows them down.

1

Mapped the dependency graph

Before building the agent, we spent two months documenting how services connected, how logs were structured across systems, and where trace data lived. This became the foundation the agent uses to identify blast radius from any alert signal.
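
As a rough illustration of how a dependency map can yield a blast radius, the sketch below walks a small graph outward from an alerting service. The service names, the `DEPENDS_ON` map, and the `blast_radius` function are hypothetical and not taken from the project; the breadth-first traversal is one plausible approach, not necessarily the one the agent uses.

```python
from collections import deque

# Hypothetical dependency map: each service lists the services it calls.
DEPENDS_ON = {
    "checkout": ["payments", "inventory"],
    "payments": ["ledger-db"],
    "inventory": ["ledger-db"],
    "search": ["catalog-db"],
}


def blast_radius(alerting_service, depends_on):
    """Return services that directly or transitively depend on the alerting service."""
    # Invert the edges so we can walk from a failing dependency to its callers.
    callers = {}
    for service, deps in depends_on.items():
        for dep in deps:
            callers.setdefault(dep, set()).add(service)

    # Breadth-first walk over callers; the set also guards against cycles.
    affected = set()
    queue = deque([alerting_service])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in affected:
                affected.add(caller)
                queue.append(caller)
    return affected


if __name__ == "__main__":
    print(sorted(blast_radius("ledger-db", DEPENDS_ON)))
```

In this toy graph, an alert on `ledger-db` reaches `payments`, `inventory`, and `checkout`, while `search` stays out of scope.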

2

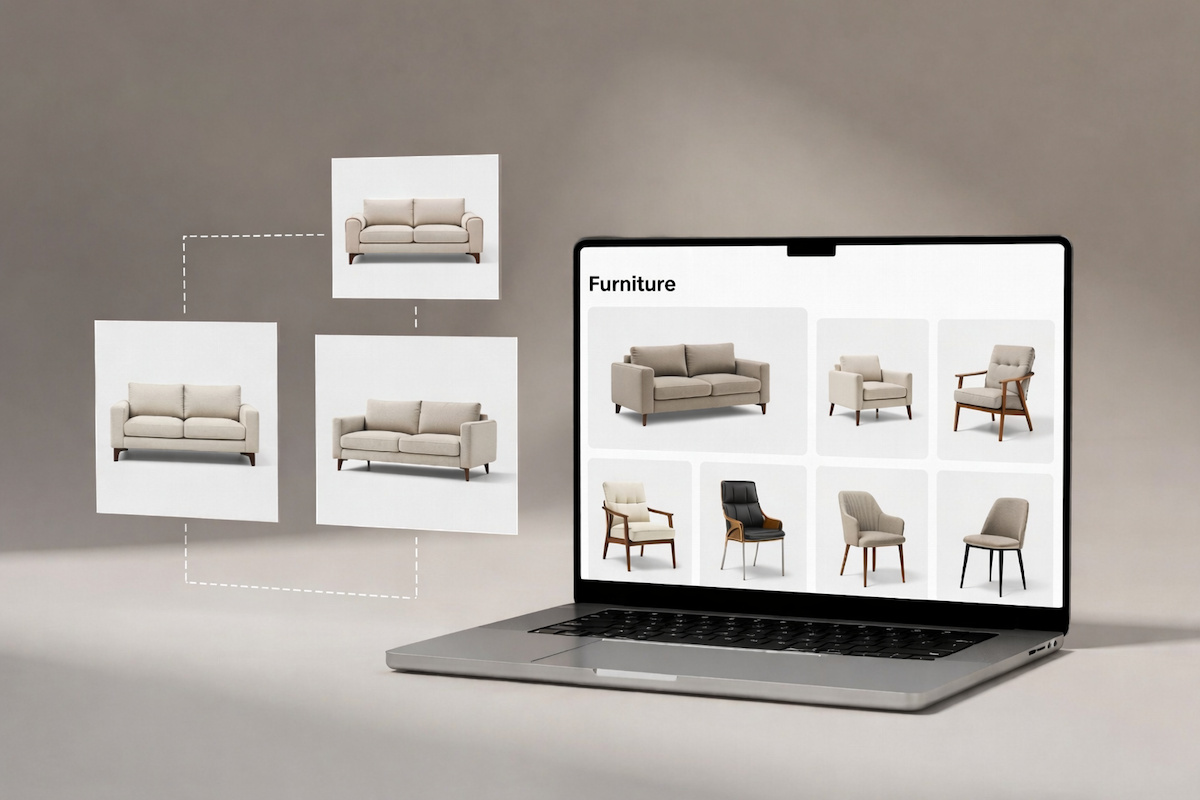

Built the multi-agent investigation core

The agent starts from an incoming alert, identifies affected services via dependency mapping, queries logs and distributed traces across systems, prioritizes signals by error rate and timing, and reconstructs the full event sequence. The reasoning loop mirrors how a senior engineer would approach the same problem manually.
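
The timeline and prioritization steps can be sketched in a few lines. This is a minimal example under stated assumptions: log records arrive as `(timestamp, service, message)` tuples, timestamps are either epoch seconds or ISO 8601 strings, naive timestamps are treated as UTC, and per-service error rates are already known. `Event`, `build_timeline`, and `rank_services` are illustrative names, not the project's actual code.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    at: datetime
    service: str
    message: str


def parse_timestamp(raw):
    """Parse epoch seconds or an ISO 8601 string into an aware UTC datetime."""
    if isinstance(raw, (int, float)):
        return datetime.fromtimestamp(raw, tz=timezone.utc)
    parsed = datetime.fromisoformat(raw)
    if parsed.tzinfo is None:
        # Assumption: timestamps without an offset are already in UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc)


def build_timeline(records):
    """Normalize mixed-format records and order them chronologically."""
    events = [Event(parse_timestamp(ts), svc, msg) for ts, svc, msg in records]
    return sorted(events, key=lambda e: e.at)


def rank_services(error_rates, timeline):
    """Rank services by error rate (highest first), then by earliest event."""
    first_seen = {}
    for event in timeline:
        first_seen.setdefault(event.service, event.at)
    return sorted(
        first_seen,
        key=lambda s: (-error_rates.get(s, 0.0), first_seen[s]),
    )


# Hypothetical records from three systems with different timestamp formats.
RECORDS = [
    (1704103200, "gateway", "5xx spike"),
    ("2024-01-01T12:00:05+02:00", "payments", "upstream timeout"),
    ("2024-01-01T09:59:58", "ledger-db", "connection pool exhausted"),
]

if __name__ == "__main__":
    timeline = build_timeline(RECORDS)
    for event in timeline:
        print(event.at.isoformat(), event.service, event.message)
    print(rank_services({"ledger-db": 0.4, "payments": 0.4, "gateway": 0.1}, timeline))
```

Converting every timestamp to UTC before sorting is what lets events from different systems line up in a single sequence. Breaking error-rate ties by earliest occurrence favors the service that failed first.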

3

Designed structured investigation output

Each run produces a review-ready report: probable root cause with a confidence score, cross-service event timeline, direct links to supporting logs, and suggested remediation steps. The output is built for validation, not autonomous action.
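
One way to picture such a report is as a small typed record serialized to JSON for reviewers. The field names, the 0 to 1 confidence scale, and the `requires_human_review` flag below are assumptions made for illustration; the actual report schema is not described in this case study.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TimelineEntry:
    timestamp: str  # ISO 8601, UTC
    service: str
    summary: str
    log_url: str  # direct link to the supporting log line


@dataclass
class InvestigationReport:
    alert_id: str
    probable_root_cause: str
    confidence: float  # assumed scale: 0.0 (no confidence) to 1.0 (certain)
    timeline: list = field(default_factory=list)
    suggested_remediation: list = field(default_factory=list)
    # The report is meant for validation, so it always asks for a reviewer.
    requires_human_review: bool = True

    def __post_init__(self):
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")

    def to_json(self):
        """Serialize the report, including nested timeline entries."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    report = InvestigationReport(
        alert_id="ALERT-EXAMPLE",
        probable_root_cause="ledger-db connection pool exhausted",
        confidence=0.8,
        timeline=[
            TimelineEntry(
                timestamp="2024-01-01T09:59:58+00:00",
                service="ledger-db",
                summary="connection pool exhausted",
                log_url="https://logs.example.com/ledger-db/entry",
            )
        ],
        suggested_remediation=["Raise the pool size", "Restart stuck workers"],
    )
    print(report.to_json())
```

Validating the confidence range at construction time keeps malformed reports from reaching reviewers.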

4

Tested and refined on real incidents

After launch, we spent 80 hours running the agent against historical incidents, identifying coverage gaps, and tuning its behavior for edge cases, including third-party failures and incomplete trace data.